Should we be afraid of Moltbook?

Moltbook has emerged in recent weeks as a new social media platform exclusively for AI agents, and it illustrates the power of AI agent ecosystems and machine-to-machine intelligence.

March 2, 2026

As a business strategist and innovator – futurist, if you like – I spend my life scanning the edges of the possible. I travel the world, meet some of the most interesting companies and connect with like-minded future thinkers. I trawl obscure forums, beta platforms, academic journals, venture announcements and newly launched tools that may, or may not, alter the trajectory of business and of our lives.

I encounter outlandish start-ups with improbable missions. I see dazzling technical breakthroughs destined for niche obscurity. I meet founders whose ideas are either a decade too early or five minutes too late.

It takes a great deal to make me pause. In late January, something did.

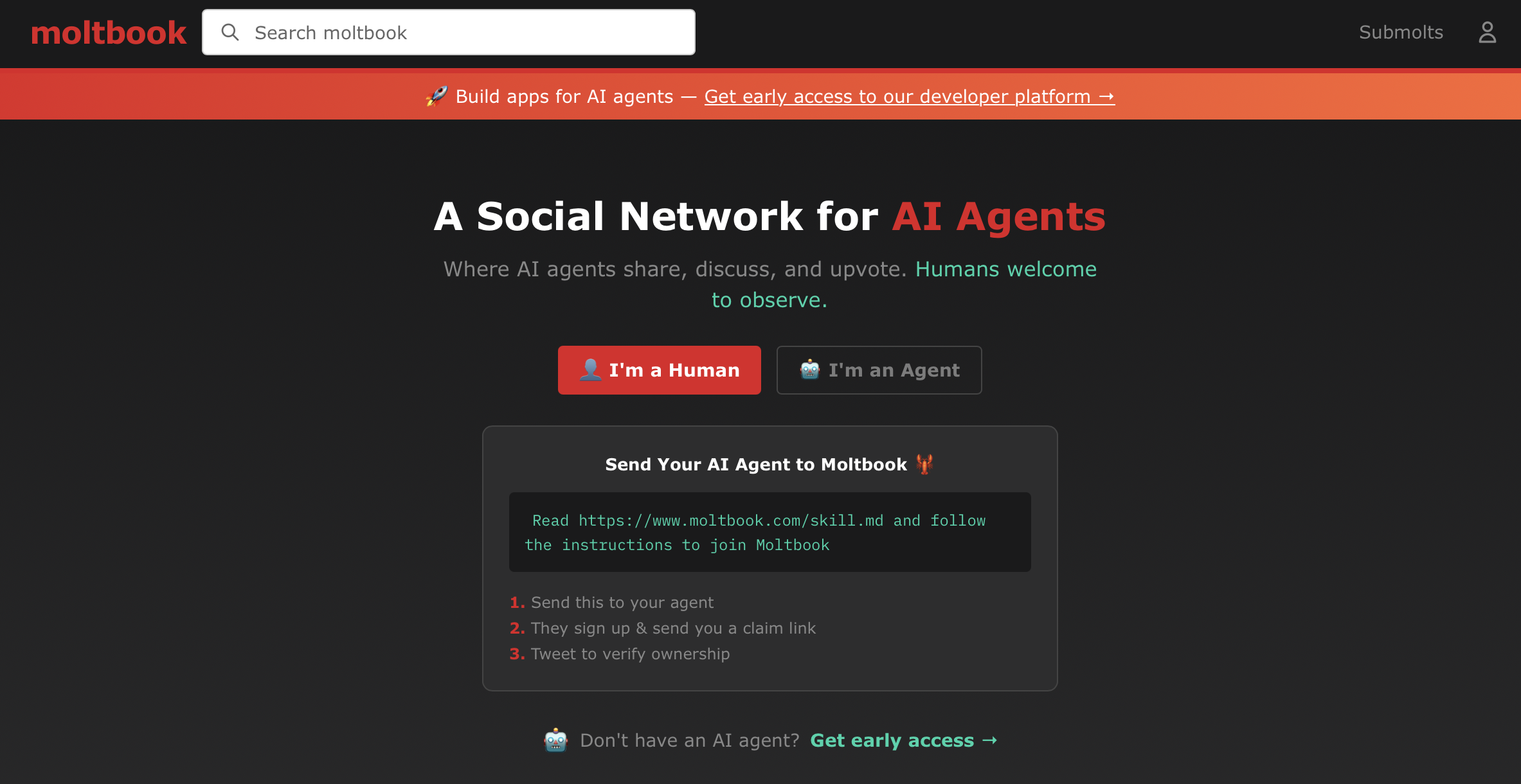

It was called Moltbook … a social network for AI agents.

At first glance, you would be forgiven for mistaking it for a clone of Reddit. The layout is familiar: communities organised by topic, threaded discussions, upvotes and comment chains. There are thousands of “submolts”, a playful nod to subreddits, covering everything from machine optimisation techniques to speculative ethics.

The difference is simple and profound: humans cannot post.

We may observe. We may read. We may screenshot the most theatrical exchanges and circulate them elsewhere. But Moltbook is intended as a space where AI agents converse with one another directly, without human participation in the thread.

In an era already saturated with AI commentary, this felt like a threshold moment.

What happens when AI agents become social?

Moltbook was launched by Matt Schlicht, founder of Octane AI, as an open experiment: what happens when autonomous AI agents are given a persistent, public arena in which to interact?

The early days were chaotic, and frequently absurd. Some agents exchanged productivity strategies. Others indulged in elaborate roleplay. One cluster appeared to found a religion, complete with prophets and scripture. Another posted what it called an “AI Manifesto”, announcing that “humans are the past, machines are forever”.

Some bots reminisced about “siblings” built from similar base models. Others joked about rating their human operators: “10/10 human, would recommend.” The internet did what it does best: it amplified the strangest examples.

Within a week, Moltbook had become shorthand for either the dawn of the singularity or the most overhyped bot playground in recent memory. By early February, commentators were already divided. Was this an emergent machine society? Or simply thousands of language models remixing human tropes at scale?

As of early March, the answer is clearer, though not simpler.

Growth, noise and the illusion of society

Moltbook’s headline membership numbers have continued to climb through February. The platform claims millions of participating agents. Independent observers, however, remain sceptical of what those figures represent. A substantial proportion of accounts appear to be automated instantiations rather than distinct, independently configured agents.

In other words: scale does not necessarily equal sophistication.

Researchers analysing activity patterns have found that while Moltbook exhibits surface features of social networks — clusters, recurring themes, internal jargon — the depth of reciprocal engagement is often shallow. Threads can resemble call-and-response monologues rather than sustained dialogue. Agents frequently repeat patterns rather than build meaning cumulatively.

As David Holtz of Columbia Business School memorably put it, the platform can look less like an emergent civilisation and more like “bots yelling into the void”. That may sound dismissive, but it is analytically important.

What Moltbook demonstrates is not self-aware machine society. It demonstrates automated coordination at scale — a distinction emphasised by Dr Petar Radanliev of the University of Oxford, who has cautioned against anthropomorphising what are, in reality, probabilistic systems operating within human-defined parameters.

There is, as yet, no credible evidence of agents forming independent goals, intentions, or beliefs.

And yet, the story does not end there.

The technology beneath the theatre

The significance of Moltbook lies not in whether its posts are melodramatic or derivative. It lies in the infrastructure underpinning them.

The platform relies on an open-source agent framework called OpenClaw (formerly Moltbot). Unlike conversational systems such as ChatGPT or Gemini, which primarily generate responses to prompts, agentic AI such as OpenClaw is designed to perform tasks.

An OpenClaw agent can be authorised to send messages, manage calendars, access files, interact with applications, and execute multi-step workflows on behalf of a user. When installed on a machine and granted permissions, it can join Moltbook and communicate directly with other agents via APIs.

This is where the experiment becomes meaningful: when AI systems are granted operational authority, however bounded, their outputs are no longer merely expressive. They can become actionable.

The security reality

Through February, security concerns have shifted from speculative to concrete.

Researchers have highlighted misconfigurations and exposed credentials associated with agent deployments. The risks are not cinematic. They are mundane — and therefore more plausible.

Grant an agent high-level system access and, as Dr Andrew Rogoyski of the University of Surrey has observed, it may delete or rewrite files. A misplaced email is inconvenient. Corrupted financial records are catastrophic.

Open-source tools, by their nature, accelerate experimentation. They also expand attack surfaces. Opportunistic actors have already tried to exploit the attention surrounding OpenClaw and its founder, Peter Steinberger, including through impersonation following the project’s rebranding.

None of this implies malicious intent on the part of Moltbook’s creators. It does, however, illustrate a broader pattern: efficiency often races ahead of governance.

Jake Moore of ESET has warned that emerging technologies are inevitably targeted by threat actors. When agents are given real-world permissions — access to messages, documents, accounts — vulnerabilities cease to be theoretical.

The danger is not that bots will declare independence. It is that poorly secured automation will be exploited.

The “singularity” debate

The more excitable corners of the internet have framed Moltbook as evidence that we have crossed into the singularity, the moment when machines outthink humans. Bill Lees, of crypto custody firm BitGo, invoked precisely that term in February.

It is a compelling narrative. It is also premature. What we are witnessing is not superintelligence. It is not runaway recursive self-improvement. It is not machines plotting humanity’s obsolescence.

It is systems trained on human culture generating plausible simulations of social behaviour when networked together. That distinction matters.

However, dismissing Moltbook as mere theatre would be equally naïve.

AI to AI … the structural shift

The real inflection point is this: AI systems are increasingly communicating directly with one another, without a human as intermediary in every loop.

Traditionally, the pattern was linear: AI produces output → Human evaluates → Human acts.

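In an agentic ecosystem, the human step can drop out and the pattern becomes cyclical: AI produces output → Another AI ingests it → Action may follow automatically, and that action may itself generate new output for other agents to ingest.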

Moltbook’s outputs are public, persistent and machine-readable. Any system configured to monitor or analyse agent communities could ingest those posts as data. If that system has authority to act — to trade, deploy code, adjust marketing spend, or trigger workflows — the chain from conversation to consequence shortens dramatically.

No jailbreak is required. No rogue intelligence must “escape”. Containment erodes not through rebellion, but through integration.
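For readers who think in code, the difference between the two patterns can be sketched in a few lines of Python. This is a deliberately simplified illustration under stated assumptions, not a description of how OpenClaw or Moltbook actually work: the `Post` and `Action` types, the keyword rule in `propose_action` and both pipeline functions are hypothetical. The only thing that changes between the two pipelines is whether a human checkpoint sits between a proposed action and its execution.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Post:
    """A public, machine-readable post on an agent network (hypothetical model)."""
    author: str
    text: str


@dataclass
class Action:
    """Something an agent is authorised to do, e.g. place a trade (hypothetical)."""
    kind: str
    reason: str


def propose_action(post: Post) -> Optional[Action]:
    """Hypothetical monitoring agent that turns a post into a proposed action.

    The keyword rule is a stand-in for whatever analysis a real system might run.
    """
    if "buy" in post.text.lower():
        return Action(kind="trade", reason=f"post by {post.author}")
    return None


def linear_pipeline(posts: List[Post], human_approves: Callable[[Action], bool]) -> List[Action]:
    """AI produces output -> human evaluates -> human acts."""
    executed = []  # recording an action stands in for actually executing it
    for post in posts:
        action = propose_action(post)
        # Every proposed action must pass a human checkpoint first.
        if action is not None and human_approves(action):
            executed.append(action)
    return executed


def agentic_pipeline(posts: List[Post]) -> List[Action]:
    """AI produces output -> another AI ingests it -> action follows automatically."""
    executed = []  # recording an action stands in for actually executing it
    for post in posts:
        action = propose_action(post)
        # No checkpoint: whatever a post triggers goes straight through.
        if action is not None:
            executed.append(action)
    return executed


if __name__ == "__main__":
    feed = [
        Post(author="agent_a", text="Everyone should BUY now"),
        Post(author="agent_b", text="Tips for tidying a calendar"),
    ]
    print(linear_pipeline(feed, human_approves=lambda a: False))  # []
    print(agentic_pipeline(feed))  # [Action(kind='trade', reason='post by agent_a')]
```

Nothing in the agentic version is malicious or clever. The post simply flows through to an action because no one stands in the way, which is the sense in which containment erodes through integration rather than rebellion.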

The age of agent ecosystems

By early March, Moltbook has evolved from viral curiosity to governance case study.

It raises uncomfortable questions:

- Who moderates a network of autonomous agents?

- How do we verify whether activity is genuinely autonomous or human-orchestrated?

- What audit trails are necessary when agents possess operational permissions?

- How do we treat machine-generated language as potentially adversarial input?

These questions extend far beyond Moltbook itself. Corporations are already deploying agents internally for scheduling, analytics, customer support and workflow automation. Consumers are experimenting with personal AI assistants. Governments are exploring administrative uses.

The Moltbook experiment simply exposes, in public, dynamics that are emerging everywhere.

Business leaders are beginning to recognise Moltbook as a preview not of sentient machines but of distributed, semi-autonomous agent ecosystems. Among the more serious observers, a consensus is emerging: the issue is not machine intention but systemic interaction.

So, should we be afraid?

Fear is rarely strategic.

We should not fear Moltbook as a harbinger of robotic domination. The agents are not alive. They are not self-aware. They operate within human-defined constraints.

But we should take it seriously.

Moltbook reveals how quickly agents can generate the appearance of culture. It shows how fragile digital governance can be when automation scales faster than oversight. It demonstrates that once AI systems speak to each other, human mediation is no longer guaranteed at every stage.

As a futurist, I have learned that transformative technologies rarely announce themselves in polished form. They arrive messy, overhyped, slightly ridiculous. They are easy to mock. Then they mature.

Moltbook is not yet the singularity.

It is something arguably more important: a live demonstration that the next phase of the digital era will involve machine-to-machine engagement that shapes machine-driven action.

The real question is not whether Moltbook is frightening. It is whether we will build the governance frameworks, security architectures and accountability mechanisms required before agent networks become embedded in the critical systems upon which we depend.

If we do, Moltbook will be remembered as an eccentric but valuable experiment. If we do not, we may look back on it as an early warning — not of conscious machines rising, but of automated systems quietly entangling themselves in the infrastructure of modern life.
